Help EFF Solve an Issue That's Bigger than Creepy Ads

[link]

Deeplinks

May 13 2026 at 05:10 PM

Millions of people around the world use EFF's Privacy Badger. This browser extension blocks the hidden trackers that twist your web browsing into a commodity for Big Tech, advertisers, scammers, and data brokers. But did you know that we’re trying to solve an issue that’s even bigger than creepy ads and user profiling? You can help.

Online tracking isn't just creepy and unethical. It also enables government surveillance. Widespread commercial surveillance and weak privacy laws allow data brokers to harvest your data and sell it to law enforcement agencies including the FBI, CBP, and ICE. The government exploits this system to buy sensitive information about you that they would ordinarily need a warrant to collect, like your location over time.

With your help, EFF is fighting back. Our team is working to enact stronger laws to uphold your privacy. We’re advocating for consumer rights in the courts. We’re investigating how these technologies affect our communities. And we’re cutting off surveillance advertising at the source with tools like Privacy Badger for everyone. You can support this work as an EFF member.

End Mass Surveillance

Privacy is a human right because it gives you a fundamental measure of security and freedom. That is why we at EFF focus on your ability to have private conversations and interact with the world using technologies that you choose. But when tools that many of us must rely on serve corporate surveillance, they also feed government surveillance. We owe it to ourselves to fight the mass spying used to control and intimidate people. Let’s do this.

For a limited time, you can join EFF as a monthly or one-time donor and pick up a new Privacy Badger Crewneck sweatshirt. The embroidered Privacy Badger mascot appears above the Traditional Chinese for “privacy” because human rights are universal.

You can also get a set of puffy stickers as a token of thanks. Our little Ghostie protects privacy in Arabic, English, Japanese, Persian, Russian, and Spanish.

Claw Back! This year’s member t-shirt is hot off the press featuring an orange cat swatting at the street-level surveillance equipment multiplying in our communities. You might empathize with him, but there’s a better way. Let’s end the law enforcement contracts, harmful practices, and twisted logic that enable mass spying in the first place.

You can support our mission for technology in the public interest today. Join the movement and become an EFF member.

____________________

EFF is a member-supported U.S. 501(c)(3) organization. We've received top ratings from the nonprofit watchdog Charity Navigator since 2013! Your donation is tax-deductible as allowed by law.

The Science is Not Settled: How Weak Evidence is Fueling a National Push to Ban Social Media for Youth

[link]

Deeplinks

May 13 2026 at 04:48 PM

As statehouses ramp up for 2026, we’re seeing a familiar and concerning trend of lawmakers rushing to regulate the internet based on shockingly shaky science. From the California State Assembly to the Massachusetts and Minnesota legislatures, a wave of bills is crashing against the digital lives of young people, with proponents of these measures framing social media access as a "public health epidemic," or a "mental health crisis," even though we have yet to see any of the settled science that those labels usually invoke.

As a digital rights organization dedicated to the civil liberties of all users, EFF’s expertise lies in reminding lawmakers that young people enjoy largely the same free speech and privacy rights as adults. EFF is not a social science research shop, but we can read the emerging research. What that research shows is much more nuanced than what is claimed by those proposing to ban young people from social media, and it is clear that the research and theories used to justify these sweeping bans are far from settled. The rush to ban access to digital platforms is being fueled by "pop psychology" narratives and a collection of statistically flawed studies that do not meet the rigorous standards required for such a massive infringement on youth autonomy and constitutional rights.

The Lie of A "Settled" Consensus

The current legislative push relies heavily on a specific, media-friendly narrative that the "great rewiring" of the adolescent brain is a proven fact. This theory suggests that smartphones and social media are the primary, if not sole, drivers of a global uptick in teen anxiety, depression, eating disorders, self harm, etc. While this narrative makes for a compelling airport-bookstore read, it quickly collapses under the scrutiny of the broader scientific community.

Independent researchers, including developmental psychologists from institutions like the University of California, Irvine, and Brown University, have repeatedly found that the evidence for such claims is mixed, blurry, and often contradictory. Large-scale meta-analyses covering dozens of countries have failed to show a consistent, measurable association between the rollout of social media and a decline in global well-being. In reality, we are seeing a classic case of what many of our middle school science teachers warned us about: “correlation” being sold as “causation.”

Additionally, the studies used to support these measures often fail to account for or exclude significant alternative explanations for rising teen anxiety and depression, such as the lasting impact of pandemic-era isolation, the persistent threat of school gun violence, and mounting economic or climate-related stress. By focusing narrowly on social media, these findings frequently overlook the broader societal factors that also impact youth mental health.

The Cult of the "Anxious" Expert

The current push for blanket social media bans relies almost exclusively on the work of Jonathan Haidt, particularly his book The Anxious Generation. While Haidt is an amiable and brilliant storyteller, he is not a clinical psychologist or a specialist in child development. He is a social psychologist who writes about moral psychology at a business school. Nonetheless, the book has made it to every Best Seller list, and Haidt has been treated as an expert on podcasts with massive reach, hosted by the likes of Oprah, Joe Rogan, Michelle Obama, and Trevor Noah. As a result, his message has been heard by a large subset of society. That message rests primarily on three prescriptions: no smartphones or social media before age 16, phone-free schools, and more “unsupervised, real-world independence.”

To highlight Haidt’s reach when it comes to legislation banning social media: the California committee analysis for the proposed California social media ban mentions Haidt 20 times; the Governor of Utah promoted the book as a “must-read” months before signing the nation’s first social media ban; Haidt is cited in bill analysis for the bill banning social media in Florida; his work is mentioned in a federal bill aiming to ban phones in schools; and he provided formal testimony before the U.S. Senate Judiciary Committee (Subcommittee on Technology, Privacy, and the Law) in May 2022.

While Haidt’s research has been paramount to legislation stripping millions of young people of their rights to expression and connection, his conclusions are not without challenge, and many experts in the field argue that the evidence is less than ironclad.

The “Bad Science” Fueling Social Media Bans

While we can admit that Jonathan Haidt’s "great rewiring" theory makes for a gripping narrative, we cannot ignore that independent researchers and statisticians have identified significant flaws in the data used to justify it. That means we are currently watching policymakers legislate blanket bans based on evidence that would be rejected in almost any other field of public health.

The reality is that research has consistently disproven the oft-assumed link between social media use and poor mental health in youth, and actually indicates that moderate internet use is a net positive for teens’ development, and negative outcomes are usually due to either lack of access or excessive use. In one major study of 100,000 adolescents, a “U-shaped association emerged where moderate social media use was associated with the best well-being outcomes, while both no use and highest use were associated with poorer well-being.” We also know that young people’s relationship with social media is complex, as it provides them essential spaces for civic engagement, identity exploration, and community building—particularly for LGBTQ+ and marginalized youth who may lack support in their physical environments.

But again, the image Haidt presents in his book is increasingly at odds with the broader academic consensus. As mentioned, critics argue that the evidence for the mental health impacts of social media is mixed, blurry, and often misinterpreted. NYU statistics expert Aaron Brown, writing for Reason, notes that many of the studies in Haidt’s exhaustive reference list are statistically unreliable or fail to show a strong causal link. Prof. Candace Odgers, a leading voice in psychological science, explains the "selection effect" that legislators often ignore:

“Hundreds of researchers, myself included, have searched for the kind of large effects suggested by Haidt. Our efforts have produced a mix of no, small and mixed associations. Most data are correlative. When associations over time are found, they suggest not that social-media use predicts or causes depression, but that young people who already have mental-health problems use such platforms more often or in different ways from their healthy peers.”

This raises a fundamental question of legislative responsibility: If the science is not settled, how can legislators confidently declare a “public health crisis” to justify stripping away young people’s First Amendment rights? By bypassing the rigorous, nuanced findings of the scientific community in favor of a more convenient narrative, legislators are choosing emotion over evidence. Before imposing such draconian restrictions on young people’s access to information, policymakers have an obligation to do the heavy lifting: to dig into the actual research and listen to the experts who are sounding the alarm on oversimplified conclusions.

The Dangers of the "Social Contagion" Narrative

Perhaps the most troubling aspect of Haidt’s crusade is its overlap with ideological rhetoric that pathologizes the identities of marginalized youth, and how that makes its way through efforts to ban social media for youth. A recurring theme in the literature favored by proponents of social media bans is the idea of "social contagion"—specifically regarding the rise in young people identifying as transgender or non-binary. Haidt dedicates an entire chapter of his book to this (ch.6, pt 3, p. 165), talking about “Why Social Media Harms Girls More Than Boys,” stating that:

“The recent growth in diagnoses of gender dysphoria may also be related in part to social media trends, [...] the fact that gender dysphoria is now being diagnosed among many adolescents who showed no signs of it as children all indicate the social influence and sociogenic transmission may be at work as well.”

These harmful theories suggesting that social media is "infecting" young people with gender dysphoria are false and not supported by peer-reviewed clinical research. But by legitimizing "experts" who promote these debunked theories, legislators—especially those in states like California who pride themselves on being a sanctuary for LGBTQ+ youth—are inadvertently platforming the same rhetoric used in other states to ban gender affirming care for youth. This "social contagion" narrative is a tool of exclusion, not a scientific reality, and we must be wary of any "public health" argument that treats community-building and self-discovery among marginalized young people as a "purported mental illness" spread via TikTok.

A Better Path: Digital Wellness, Not Bans

Fortunately, an alternative is already emerging. California's A.B. 2071, for instance, is a student-authored "digital wellness" bill that offers a measured, evidence-based approach rather than prohibition. The bill advocates for a curriculum that teaches students how to manage algorithms, recognize cyberbullying, and regulate their own relationship with technology. Instead of trying to completely shield young people from social media, education-based approaches empower them and provide skills that stay with a young person long after they leave the classroom.

JustLeadershipUSA, a criminal justice organization, has a slogan that rings true in this instance too: “Those closest to the problem are closest to the solution.” So let’s start listening to what our young people are asking us for—more education—instead of imposing paternalistic, disempowering bans.

Legislating With Precision instead of Emotion

Adolescent mental health struggles are a complex, multifaceted crisis. It is a long-standing crisis, and it has been driven by economic instability, the opioid epidemic, and the threat of school violence, among other issues. To pin all of society's woes on a smartphone app is not just a scientific error; it is a policy failure that ignores the real, material needs of young people both online and off.

Legislators must stop legislating as "anxious parents" and start acting as measured policymakers, because for some youth, social media platforms are a lifeline. UNICEF and other global human rights organizations have warned that age-related restrictions and blanket bans can backfire in three critical ways: isolating marginalized youth (like LGBTQ+ youth, students in rural areas, foster youth, or those with disabilities) for whom social media is often the only place they can find a supportive community; necessitating invasive mass collection of biometric data or government-issued IDs from all users, including adults; and pushing young people toward less-regulated, "darker" corners of the web where content moderation is non-existent and the risks of actual exploitation are significantly higher.

Legislators have a valid interest in protecting children, but that interest must be pursued through tailored, measured approaches. We cannot allow emotions or a collection of flawed data sets to justify a historic rollback of digital rights.

Broken Promises: RIP Instagram’s End-to-End Encrypted DMs

[link]

Deeplinks

May 12 2026 at 10:11 PM

Last week, Instagram ended its opt-in, and therefore rarely used, end-to-end encryption feature. Years after publicly promising to provide the privacy protections of end-to-end encryption across its platforms by default, it instead gave up on that technical challenge. Now, we've all lost an option for safer conversations on one of the biggest social media platforms in the world.

In an announcement in 2023, Meta bragged about how it had successfully encrypted Messenger, and teased that Instagram was in progress. Even before then, they’d talked about how important encryption was in Messenger and Instagram in a white paper published in 2022, stating:

We want people to have a trusted private space that’s safe and secure, which is why we’re taking our time to thoughtfully build and implement e2ee by default across Messenger and Instagram DMs.

So where did the reversal come from? In a statement, Meta claimed that, “Very few people were opting in to end-to-end encrypted messaging in DMs.” This isn’t all that surprising, as turning it on was an optional four-step process that few people knew about. Defaults matter, and Meta’s choice to blame people for failing to opt into this feature is proof of how much. In that same statement, the company pointed people to WhatsApp for access to encrypted messaging. Yet if Meta truly wanted people to have a trusted private space to communicate, it would meet them everywhere they are: on WhatsApp, on Messenger, and on Instagram.

But at least Meta was straightforward about the fact that it will not continue to support or work on this feature. That's rare. Most tech company promises aren’t broken explicitly; they just remain undelivered long enough to be forgotten.

This is particularly disappointing as other companies take even bigger swings, like Google and Apple working together to implement end-to-end encryption over Rich Communication Services (RCS), and Signal’s continued work to make its app simpler and easier to use for everyone.

Meta abandoning this principle is disheartening, especially as we are still waiting for other promised features from the company, like end-to-end encryption in Facebook Messenger group messages. Instead of blaming users for not using these sorts of features and then abandoning the promise of delivery, Meta—and other tech companies—should start by enabling strong privacy protective features by default.

Victory! End-to-End Encrypted RCS Comes to Apple and Android Chats

[link]

Deeplinks

May 12 2026 at 04:48 PM

This week, Apple released iOS 26.5, an update that supports end-to-end encryption for Rich Communication Services (RCS), meaning conversations between Android and iPhone will soon be encrypted in the default chat apps. This has been a long time coming, and is a welcome delivery on a promise both Google and Apple made.

With this update, conversations that take place between Apple’s Messages app and Google Messages on Android will be end-to-end encrypted by default, as long as the carrier supports both RCS and encrypted messages (you can find a list of carriers here). RCS messages are a replacement for SMS, and in 2024 Apple started supporting it, making for a marked improvement in the quality of images and other media shared between Android and iPhones.

Now, those conversations can also benefit from the increased privacy and security that end-to-end encryption offers, making it so neither Google, Apple, nor the cellular carriers have access to the contents of messages. This feature comes courtesy of both Apple and Google supporting the GSMA RCS Universal Profile 3.0, which implements the Messaging Layer Security protocol for encryption. Metadata will likely still be collected and stored for these conversations, making alternatives like Signal still a better option for many conversations. Likewise, if you back up those conversations to the cloud, they may be stored unencrypted unless you enable Advanced Data Protection on iOS (Google Messages end-to-end encrypts the text of messages in backups, but not the media, so we’d like to see a similar offering as ADP on Android). Still, this is a significant step forward for the privacy of millions of conversations worldwide.

End-to-end encrypted RCS messaging is still marked as beta on Apple devices, likely because the rollout is dependent on carriers as well as the Android phone running the most recent version of Google Messages.

It might take some time before you get this feature in your chats, and until you do, remember that the conversations are not protected with end-to-end encryption. But once everyone in the conversation is on the right software version and the carrier support is implemented, you will see a lock icon and the text “Encrypted” at the top of the conversation for any chats you have over RCS.

We applaud Apple and Google for getting this across the finish line and Encrypting It Already! More companies should take these sorts of difficult but necessary steps to protect the privacy of our conversations and our data.

EFF Launches New Offline Campaign for Saudi Wikipedian Osama Khalid

[link]

Deeplinks

May 12 2026 at 04:41 PM

Osama Khalid was just twelve years old when he began contributing to Wikipedia Arabic. At the height of the blogging era, he became a prolific blogger, publishing writings on his home country of Saudi Arabia, meetups he attended, and his opinions and observations about open source technology and freedom of expression. He advocated for internet freedom, contributed time and translations to various projects (including EFF’s HTTPS Everywhere), and was a thoughtful presence at the conferences he attended around the world, all while training to become a pediatrician.

In July of 2020, he was detained amid a wave of arbitrary arrests carried out by the Saudi authorities during the Covid-19 lockdown and initially given a five-year prison sentence. That sentence was later increased on appeal to 32 years, then reduced in 2023 to 25 years, and again to 14 years this past September. In a joint letter that we signed on to in April, the Saudi human rights organization ALQST, which has been leading the campaign for Osama’s release, wrote: “The huge discrepancy between sentences handed down at different stages in the case underscores the arbitrary manner in which sentencing is carried out in the Saudi judicial system.”

So, what was his “crime”? Sharing information online that conflicted with official narratives. Osama’s Wikipedia contributions included pages on critical human rights issues in Saudi Arabia, including the treatment of women’s rights activist Loujain al-Hathloul (herself an EFF client) and Saudi Arabia’s infamous al-Ha’ir prison. His blog, which has since been taken offline, included articles such as one criticizing government plans for the surveillance of encrypted platforms.

Over the years, we’ve campaigned for the release of a number of individuals imprisoned for their speech. Our contributions to the campaigns of Ola Bini, the Swedish software developer who has been targeted by the government of Ecuador for the past seven years, and Alaa Abd El Fattah, have had real impact. These cases are reminders that attacks on free expression are rarely confined to borders: governments around the world continue to use vague cybercrime laws, national security claims, and politically motivated prosecutions to silence critics, technologists, journalists, and activists.

Supporting these two—and others we’ve highlighted in our Offline project—has never been about defending only individuals. It has also been about defending the principle that writing code, sharing ideas, criticizing governments, and organizing online should not be treated as crimes. Public pressure, international solidarity, legal advocacy, and sustained campaigning can shift the political cost of repression—and, in some cases, help secure meaningful protections for those targeted.

That’s why we’re highlighting Osama’s case and will continue to work with partners including ALQST to advocate for his release. Osama Khalid, like so many human rights defenders, journalists, and internet users detained by the Saudi government, deserves to be free.

A Hacker’s Guide to Circumventing Internet Shutdowns

[link]

Deeplinks

May 12 2026 at 03:45 PM

Internet shutdowns are devastating for human rights. When people are disconnected from the internet and digital services, it impacts all aspects of their lives, from accessing essential information to seeking medical care or communicating with loved ones, both in that country and externally. On January 8th, 2026, the government of Iran shut down internet communications for the entire country as a rebellion threatened to topple the authoritarian government. The government then proceeded to execute as many as 656 dissidents over the next 3 months, though the actual number could be much higher. That is part of the point: shutdowns often precede government acts of violence.

Iran’s shutdown was hardly an isolated incident. Earlier this month, the U.S. military invaded Venezuela and kidnapped the Venezuelan president shortly after US cyber forces shut down all internet access and power grids for the capital city of Caracas. India routinely shuts off internet access in the Kashmir region, and Syria shut down internet communications as many as 73 times, most recently in 2025. Even the UK recently had a localized temporary internet shutdown. At the time of this writing there are 14 ongoing internet shutdowns worldwide.

Government shutdowns aren’t the only reason an entire region or country might lose internet access. Hurricanes, earthquakes, and wildfires can take out internet connections in many regions of the world, and will only increase as climate change ramps up. They can completely disable the communications infrastructure relied upon by victims, their families, first responders, and disaster relief efforts. Having an alternate way to communicate in such times can save lives.

One way to limit the impact of such shutdowns is to prepare in advance by setting up systems and structure for circumvention and resiliency.

To keep people connected during internet shutdowns and blackouts, communication networks must be operational before and after the disaster or shutdown. To be effective, they must be widespread so that people can get access to them reliably, and they must be usable by a majority of the community. And any viable solution must be accessible and sustainable on a community level, not just to people with vast financial resources or technical knowledge. You shouldn’t have to be a tech wizard to be able to communicate with your neighbors!

Radios

There are many ways for a community to build their own disaster-resilient communications. Radios, for example, are cheap, decentralized, and resilient. Many people with moderate technical skill have set up Meshtastic repeaters. Meshtastic is a way to use common unlicensed radio spectrum and a technology called LoRa to have peer-to-peer decentralized communications with people in your neighborhood or city. When you buy a Meshtastic device (cheap ones cost around $20) you can link it to your phone and send text messages to people in your area without ever touching the telephone network or the internet. Messages are delivered directly from person to person over public radio waves.

There is also amateur radio, also known as ham radio, which has been used in disaster communications for decades. Ham radio requires a license, but allows you to communicate farther than Meshtastic, using repeaters or even bouncing signals off the ionosphere to talk to people on the other side of the planet or even on the International Space Station. It is even possible to access the internet over ham radio.

Peer-to-peer messaging apps

Another option for communication during a shutdown is peer-to-peer messaging apps. One such project, called Briar, uses the Bluetooth functionality on phones to route messages from device to device until they reach their destination, even in instances where there is no internet. However, Briar faces the same problems many mesh projects do: almost nobody has the app installed and it’s difficult to use. If a mesh chat app isn’t already widely installed before an internet shutdown, it’s going to be even harder to get people to install it en masse once the shutdown starts.
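To make the idea of device-to-device routing concrete, here is a minimal sketch of flood-style relaying with a hop limit. It is an illustration only and is not Briar's actual protocol: the `flood_deliver` function, the `links` map of which devices are in radio range of each other, and the device names are all hypothetical.

```python
from collections import deque


def flood_deliver(links, source, destination, hop_limit=3):
    """Return the relay path from source to destination, or None if unreachable.

    Hypothetical model: each device re-broadcasts a message it has not seen
    before to every device in range, until the hop budget runs out.
    """
    seen = {source}
    queue = deque([(source, [source])])
    while queue:
        device, path = queue.popleft()
        if device == destination:
            return path
        if len(path) - 1 >= hop_limit:
            continue  # this copy of the message has used up its hops
        for neighbor in sorted(links.get(device, ())):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [neighbor]))
    return None


# Hypothetical neighborhood: each device only reaches the ones listed.
links = {
    "alice": {"bob"},
    "bob": {"alice", "carol"},
    "carol": {"bob", "dave"},
    "dave": {"carol"},
}

path = flood_deliver(links, "alice", "dave")
no_path = flood_deliver(links, "alice", "dave", hop_limit=2)
print(path)
print(no_path)
```

The sketch also shows why adoption matters: if "bob" or "carol" never installed the app, there is no chain of devices between "alice" and "dave", and the message cannot be delivered.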

A similar effort called bitchat has recently gained some attention. Bitchat is a peer-to-peer chat system that routes over Nostr, Tor, and Bluetooth. It is unfortunately tainted in many people’s eyes by being a project by former Twitter CEO Jack Dorsey, but it is open source and runs on both Android and iOS. It was used with some success in Iran during the latest internet shutdown.

Another option is Delta Chat, which uses PGP for encryption and email for routing, while still being much simpler to use than either technology. Delta Chat is highly regarded in Iran for its ability to route a message through even the tiniest sliver of email access.

Satellite internet

Satellite internet, such as Starlink, reaches the internet through a satellite dish rather than through local wired infrastructure. Since there are no wires and no physical connection to infrastructure, it is harder for governments to shut down unilaterally, and it has been used in many cases to circumvent internet shutdowns, with people sharing bandwidth with their neighbors. Unfortunately, when the satellites are owned by tech oligarchs, such as Starlink (owned by Elon Musk), or by allied governments, the owners of those satellites may willingly shut down the network anyway.

Dreaming of a better future

Ultimately, an app that is already widely used would be the best option for shutdown-resistant communication. Imagine if WhatsApp or Signal could fall back to mesh networking over Bluetooth or Wi-Fi. Even better, imagine if our phones all had LoRa built in so we could have more effective mesh networks! What if our phones all had a connection to a satellite constellation run by an international coalition of hackers? We can dream of a better world and we can build it.

We can’t rely on tech oligarchs to save us, especially when these same companies and governments are the ones to sever our access to the internet and telecommunications. This is why it's important to set up communication mechanisms before a disaster happens.

As hackers, it's important for us to build these tools and infrastructure of decentralized communication, to help people learn how to use them, and to set up networks before disaster strikes. Get together with others in your city and start setting up resilient off-grid networks and building community now.

Before you download or use any of the tools mentioned in this guide, check with a lawyer in your jurisdiction or country and make sure you understand what legal risks you might be taking on.

A previous version of this article appeared in the Spring 2026 issue of 2600 magazine.

Canada’s Bill C-22 Is a Repackaged Version of Last Year’s Surveillance Nightmare

[link]

Deeplinks

May 11 2026 at 08:18 PM

Last year, the Canadian government pushed Bill C-2, which would erode Canadian digital rights in the name of “border security.” The bill was so bad it didn’t even make it to committee because of the backlash from the privacy community. Now, the spring’s worst sequel, Bill C-22, aka The Lawful Access Act, is trying it again.

As with most sequels, Bill C-22 makes some tweaks to problematic elements, but largely retains the same problems. The bill forces digital services, which could include telecoms, messaging apps, and more, to record and retain metadata for a full year, and expands information sharing with foreign governments, including the United States. Metadata can reveal a lot about who you communicate with, where you go, and when you do so. Expanding the collection of metadata would require companies to store even more information about their users than they already do, providing an incentive for bad actors to access that information.
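As a rough illustration of why retained metadata is sensitive, the sketch below builds a profile from records that contain no message content at all. Everything in it is an assumption made for the example: the record layout, the names, the tower identifiers, and the `profile` function are hypothetical and do not reflect any format specified in the bill.

```python
from collections import Counter
from datetime import datetime

# Hypothetical retained records: (sender, recipient, ISO timestamp, cell tower).
# No message content is stored anywhere in this data.
records = [
    ("user_a", "clinic", "2026-03-02T09:15:00", "tower_12"),
    ("user_a", "clinic", "2026-03-09T09:20:00", "tower_12"),
    ("user_a", "lawyer", "2026-03-09T11:05:00", "tower_12"),
    ("user_a", "friend", "2026-03-10T23:40:00", "tower_07"),
    ("user_a", "friend", "2026-03-11T23:55:00", "tower_07"),
]


def profile(records, user):
    """Summarize who a user contacts and where they probably sleep."""
    contacts = Counter()
    night_towers = Counter()
    for sender, recipient, stamp, tower in records:
        if sender != user:
            continue
        contacts[recipient] += 1
        hour = datetime.fromisoformat(stamp).hour
        if hour >= 22 or hour < 6:
            # Late-night activity hints at a home location.
            night_towers[tower] += 1
    likely_home = night_towers.most_common(1)[0][0] if night_towers else None
    return contacts, likely_home


contacts, likely_home = profile(records, "user_a")
print(dict(contacts))
print(likely_home)
```

Even this toy data set exposes repeated contact with a clinic and a lawyer, plus a probable home location. A full year of real records would reveal far more.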

Worst of all, Bill C-22 erodes the privacy of millions by providing a mechanism for the Minister of Public Safety to demand companies create a backdoor to their services to provide law enforcement access to data, as long as these mandates don’t introduce a “systemic vulnerability.” These widespread surveillance backdoors would likely facilitate even more data breaches than we see already. The bill also bans companies from even revealing the existence of these orders publicly.

The definitions of both “systemic vulnerabilities” and “encryption” are not clear enough in C-22, leaving wiggle room for the government to demand that companies circumvent encryption. And the overbroad definitions in the bill can include apps as well as operating systems. Canadian officials have made it clear they believe it’s possible to add surveillance without introducing systemic vulnerabilities, which is just not true. Surveillance of encrypted communications is fundamentally a systemic vulnerability.

This resembles what happened in the UK last year, when the government demanded that Apple build this type of backdoor into its optional Advanced Data Protection feature. Rather than comply, Apple withdrew the feature for its UK users. To this day, UK users still do not have access to this powerful, privacy-protective feature that provides stronger protections for data stored in iCloud. Both Meta and Apple are concerned that C-22 would give the Canadian government similar powers, and both companies have come out against the bill. The U.S. House Judiciary and Foreign Affairs committees also sent a joint letter to Canada’s Minister of Public Safety highlighting the concern around backdoors into encrypted systems.

The dangers of these sorts of backdoors are not theoretical. In 2024, the Salt Typhoon hack took advantage of a system built by Internet Service Providers to give law enforcement access to user data. When you build these systems, hackers will come.

Canadians deserve strong privacy protections, transparency into how companies handle user data, and clear safeguards around encrypted data. Bill C-22 provides none of that, instead reaching further into the digital pockets of tech companies to build broad lawful access mechanisms.

EFF to Fourth Circuit: Electronic Device Searches at the Border Require a Warrant

[link]

Deeplinks

May 11 2026 at 08:12 PM

EFF, along with the national ACLU, the ACLU affiliates in Maryland, North Carolina, South Carolina, and Virginia, and the National Association of Criminal Defense Lawyers (NACDL), filed an amicus brief in the U.S. Court of Appeals for the Fourth Circuit urging the court to require a warrant for border searches of electronic devices under the Fourth Amendment, an argument EFF has been making in the courts and Congress for nearly a decade. The Fourth Circuit heard oral arguments on May 8. The Knight Institute at Columbia University and Reporters Committee for Freedom of the Press also filed a helpful brief focusing on the First Amendment implications of border searches of electronic devices.

The case, U.S. v. Belmonte Cardozo, involves a U.S. citizen whose cell phone was manually searched after he arrived at Dulles airport near Washington, D.C., following a trip to Bolivia. He had been on the government’s radar prior to his international trip and had been flagged for secondary inspection. Border officers found child sexual abuse material (CSAM) on his phone, and he was later arrested and criminally charged.

The district court denied the defendant’s motion to suppress the images and other data obtained from the warrantless search of his cell phone. He was ultimately convicted of child pornography and sexual exploitation of minors offenses because he had used social media to entice minors to send him sexually explicit photos of themselves.

The number of warrantless device searches at the border, and the significant invasion of privacy they represent, are only increasing. In Fiscal Year 2025, U.S. Customs and Border Protection (CBP) conducted 55,318 device searches, both manual (“basic”) and forensic (“advanced”).

A manual search involves a border officer tapping or mousing around a device. A forensic search involves connecting another device to the traveler’s device and using software to extract and analyze the data to create a detailed report of the device owner’s activities and communications. However, both search methods are highly privacy-invasive, as border officers can access the same data that can reveal the most personal aspects of our lives, including political affiliations, religious beliefs and practices, sexual and romantic affinities, financial status, health conditions, and family and professional associations.

In our amicus brief, we argued that the Fourth Circuit should adopt the same legal standard for both manual and forensic searches, and that standard should be a warrant supported by probable cause and issued by a neutral judge. The highly personal nature of the information found on electronic devices is why there should not be different legal standards for different methods of search, and why a judge should determine whether the government has provided credible preliminary evidence that there’s a likelihood that further evidence will be found on the device indicating wrongdoing by the specific traveler.

Moreover, we argued that “the process of getting a warrant is not unduly burdensome,” and that “getting a warrant would not impede the efficient processing of travelers. If border officers have probable cause to search a device, they may retain it and let the traveler continue on their way, then get a search warrant. Or, where there is truly no time to go to a judge, the exigent circumstances exception may apply on a case-by-case basis.”

The Fourth Circuit in prior cases only considered forensic device searches at the border. In U.S. v. Kolsuz (2018), the court held that the forensic search of the defendant’s cell phone at the border “must be considered a nonroutine border search, requiring some measure of individualized suspicion” of a transnational offense, but the court declined to decide whether the standard is only reasonable suspicion or instead a probable cause warrant. Then in U.S. v. Aigbekaen (2019), the court held that a forensic device search at the border in support of a purely domestic law enforcement investigation requires a warrant. The court also reiterated the general Kolsuz rule for a forensic border-related device search: the “Government must have individualized suspicion of an offense that bears some nexus to the border search exception's purposes of protecting national security, collecting duties, blocking the entry of unwanted persons, or disrupting efforts to export or import contraband.” Now, manual searches are before the court.

In urging the Fourth Circuit to adopt a warrant standard for both manual and forensic device searches at the border, we argued that the U.S. Supreme Court’s balancing test in Riley v. California (2014) should govern the analysis here. In that case, the Court weighed the government’s interests in warrantless and suspicionless access to cell phone data following an arrest, against an arrestee’s privacy interests in the depth and breadth of personal information stored on a cell phone. The Court concluded that the search-incident-to-arrest warrant exception does not apply, and that police need to get a warrant to search an arrestee’s phone.

The U.S. Supreme Court has recognized for a century a border search exception to the Fourth Amendment’s warrant requirement, allowing not only warrantless but also often suspicionless “routine” searches of luggage, vehicles, and other items crossing the border. The primary justification for the border search exception has been to find—in the items being searched—goods smuggled to avoid paying duties (i.e., taxes) and contraband such as drugs, weapons, and other prohibited items, thereby blocking their entry into the country.

But a traveler’s privacy interests in their suitcase and its contents are minimal compared to those in all the personal data on the person’s cell phone or laptop. And a traveler’s privacy interests in their electronic devices are at least the same as those considered in Riley. Modern devices, over a decade later, contain even more data that can reveal even more intimate details about our lives.

We hope that the Fourth Circuit will rise to the occasion and be the first circuit to fully protect travelers’ Fourth Amendment rights at the border.

EFF Stands in Solidarity With RightsCon and the Global Digital Rights Community

[link]

Deeplinks

May 11 2026 at 05:37 PM

When governments shut down spaces for dialogue, dissent, and collective organizing, the damage extends far beyond a single event. The abrupt cancellation of RightsCon 2026—the world’s largest annual global digital rights conference—is not just a logistical disruption for thousands of researchers, journalists, technologists, and activists—it is part of a growing global pattern of shrinking civic space and increasing hostility toward free expression and independent civil society.

Just days before the conference was set to begin and as participants had begun to arrive in Lusaka, organizers announced that RightsCon would no longer proceed in Zambia or online after mounting political pressure and demands that would have excluded vulnerable communities and constrained discussion. The U.N.’s World Press Freedom Day, which was set to take place just prior to the conference, was scaled down in light of the events, and its press freedom prize ceremony postponed to a later date.

RightsCon has long served as one of the few truly global convenings where civil society groups, grassroots organizers, technologists, and policymakers can meet on equal footing to confront some of the most urgent human rights challenges of the digital age—from censorship and surveillance to internet shutdowns, platform accountability, and the safety of marginalized communities online. EFF has had a presence at RightsCon since its inception in 2011, and had planned to meet with and learn from international partners and present our work during several sessions in Lusaka.

The cancellation is especially devastating because of what RightsCon represents. For many advocates—particularly those from the global majority—it is not merely another conference. It is a rare opportunity to build solidarity across borders, form lasting partnerships, learn from other regions’ experiences, secure funding and support for local work, and ensure that the people most impacted by digital repression have a seat at the table. Holding the event in southern Africa carried particular significance, promising to elevate regional voices and strengthen local digital rights networks.

What happened in Zambia sends a chilling message. According to organizers and multiple reports, the pressure surrounding the event included Chinese government demands to exclude Taiwanese participants and moderate discussions around politically sensitive topics. At a moment when governments around the world are increasingly restricting protest, targeting journalists, cutting funds for human rights work, banning young people from online communities, censoring speech, and criminalizing civil society activity, the cancellation of RightsCon reflects the broader erosion of democratic space online and offline.

Organizations from the digital rights community have spoken out forcefully against the government’s cancellation of the conference, making clear that these attacks on civic participation will not pass unnoticed. Access Now described the decision as evidence of “the far reach of transnational repression targeting civil society.” Index on Censorship’s response warned that the move represents a dangerous escalation in attempts to suppress open dialogue, while IFEX rightly described the cancellation as a blow not just to one conference, but to freedom of expression and assembly everywhere.

We are also heartened to see statements from members of the international community—including Tabani Moyo, who spoke about the impact on the southern African community, and Taiwanese participant Shin Yang, who emphasized the importance of preserving spaces where marginalized communities can safely organize and speak—underscoring that attempts to silence civil society only reinforce the importance of defending open, global spaces for organizing and debate.

Even as this cancellation represents a serious setback, it is important to remember that the digital rights community has always adapted under pressure. Around the world, advocates continue to organize in increasingly difficult environments, finding new ways to connect, collaborate, and resist censorship and repression. Upcoming events like the Global Gathering and FIFAfrica—both of which EFF plans to attend—will bring together members of the community to tackle tough issues. And in the meantime, groups from all over the world are working together to incorporate global perspectives into platform regulations, oppose age verification laws, protect against surveillance, and fight internet shutdowns, among many other efforts.

RightsCon itself emerged from a recognition that defending human rights in the digital age requires international solidarity—and that need has not disappeared.

The conversations that were supposed to happen in Lusaka will continue elsewhere: in community spaces, online gatherings, encrypted chats, and future convenings yet to come. Governments may close venues, restrict participation, or attempt to narrow the boundaries of acceptable speech, but they cannot erase the global movement working to defend a free and open internet.

RightsCon will not go on in Zambia, but we remain heartened and inspired by the strength of the global digital rights community, stand with them in solidarity, and look forward to seeing our allies at the next RightsCon and other upcoming events.

Congress Narrowed the GUARD Act, But Serious Problems Remain

[link]

Deeplinks

May 08 2026 at 11:24 PM

Following criticism, lawmakers have narrowed the GUARD Act, a bill aimed at restricting minors’ access to certain AI systems. The earlier version could have applied broadly to nearly every AI-powered chatbot or search tool. The amended bill focuses more narrowly on so-called “AI companions”—conversational systems designed to simulate emotional or interpersonal interactions with users.

That change does address some of the broadest concerns raised about the original proposal, though some questions about the bill’s reach remain. Bottom line: the revised bill still creates serious problems for privacy, online speech, and parental choice.

Tell Congress: Oppose the GUARD Act

The new GUARD Act still requires companies offering AI companions to implement burdensome age-verification systems tied to users’ real-world identities. Even parents who specifically want their teenagers to use these systems would still face significant hurdles. A family might decide that a conversational AI tool helps an isolated teenager practice social interaction, or engage in harmless creative roleplay. A parent deployed in the military might set up a persistent AI storyteller for a younger child. Under the revised bill, those users could still face mandatory age checks tied to sensitive personal or financial information before they or their children can use these services.

The revised bill also leaves important definitions unclear while sharply increasing penalties for developers and companies that get those judgments wrong. Congress narrowed the GUARD Act. But it is still trying to solve a complicated social problem with vague legal standards, heavy liability, and privacy-invasive verification systems.

Intrusive Age-Verification Remains In The Bill

The revised GUARD Act still requires companies offering AI companions to verify that users are adults through a “reasonable age verification” system. The bill allows a broader set of verification methods than the earlier version, but they are still tied to a user’s real-world identity—such as financial records, or age-verified accounts for a mobile operating system or app store.

That approach still raises serious privacy and access concerns. Millions of Americans do not have current government ID, accounts at major banks, or stable access to the kinds of digital identity systems the bill contemplates. Even for those who do, requiring identity-linked verification to access online speech tools creates real risks for privacy, anonymity, and data security. Many people are rightly creeped out by age-verification systems, and may simply forgo using these services rather than compromise their privacy and security.

The revised definition of “AI companion” is also narrower than before, but it’s unclear at the margins. The bill now focuses on systems that “engage in interactions involving emotional disclosures” from the user, or present a “persistent identity, persona or character.”

EFF appreciates that the authors recognized that the prior definition could reach a variety of AI systems that are not chatbots, including internet search engines. But the narrowed definition could be read to also apply to a variety of chat tools that are not AI companions. For example, many modern online conversational systems increasingly recognize and respond to users’ emotions. Customer service systems, including completely human-powered ones that existed long before AI chatbots, have long been designed to recognize frustration and respond empathetically. As conversational AI becomes more emotionally responsive, a customer service chatbot’s efforts to empathize may sweep it within the bill’s definition.

Bigger Penalties, Bigger Incentives To Restrict Access

The revised bill also sharply increases penalties. Instead of $100,000 per violation, companies—including small developers—can face fines of up to $250,000 per violation, enforced by both federal and state officials.

That kind of liability creates incentives to over-restrict access, especially for minors. Smaller developers, in particular, may decide it is safer to block younger users entirely, disable conversational features, or avoid developing certain tools at all, rather than risk severe penalties under vague standards.

The concerns driving this bill are real. Some AI systems have engaged in troubling interactions with vulnerable users, including minors. But the right answer to that is targeted enforcement against bad actors, and privacy laws that protect us all. The revised GUARD Act instead responds with a privacy-invasive system that burdens the right to speak, read, and interact online.

Congress did improve this bill, but EFF’s core speech, privacy, and security issues remain.

Free Signal Guide

[link]

Deeplinks

May 08 2026 at 05:23 PM

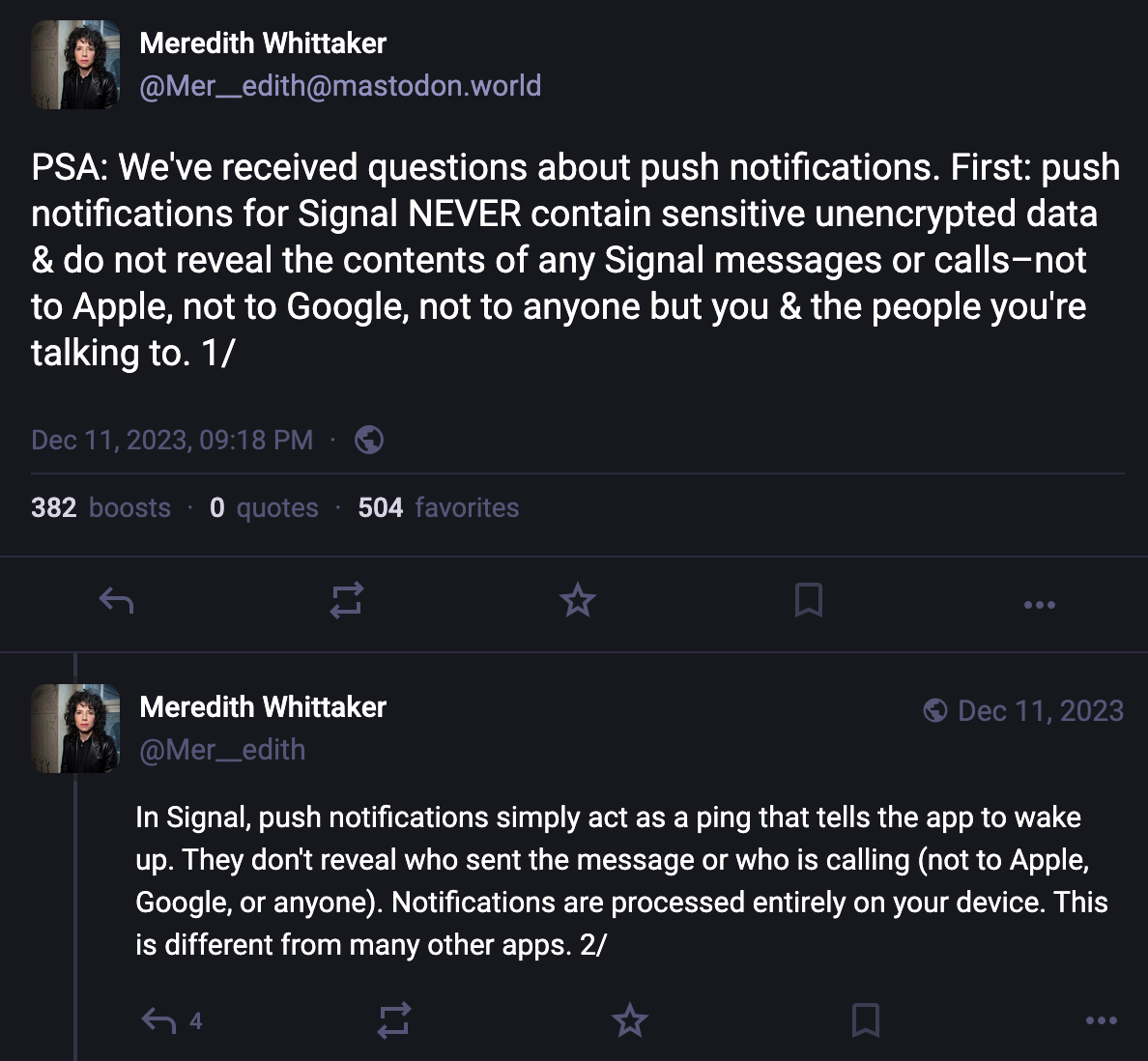

EFF friend Guy Kawasaki* has written a book: Everybody Has Something to Hide: Why and How to Use Signal to Preserve Your Privacy, Security, and Well-Being. This guide is now available in Spanish and English as an ebook in the EPUB format that you can download here. Take a look and consider sharing it with anyone you know who uses (or should use) Signal.

And don't forget: EFF has two short guides on using Signal on our Surveillance Self-Defense site: an intro How to Use Signal guide and a guide on Managing Signal Groups.

Everybody Has Something to Hide: Why and How to Use Signal to Preserve Your Privacy, Security, and Well-Being courtesy of Guy Kawasaki.

*Guy Kawasaki is an EFF donor.

Milestone 1.0.0 Release of APK Downloader `apkeep` Powers Research on Android Apps

[link]

Deeplinks

May 06 2026 at 10:44 PM

Last week, we released apkeep version 1.0.0, the latest edition of our command-line Android package downloading software. Rather than indicating major changes for the project, this milestone signifies arriving at a relatively stable and mature place after more than four years of gradual iteration.

What’s New in 1.0.0

We do have a few fresh features we’ve packed into this latest release, though—all focused on the Google Play Store:

- You can now download a dex metadata file associated with an app containing a Cloud Profile, which provides information on app performance based on real usage.

- You can now provide a token generated by the Aurora Store’s dispenser to log in anonymously for app downloads.

- You can now specify your own device profile when downloading apps from Google Play; the store uses it to deliver the app variant that works for your particular device specifications.

- We’ve also fixed an authentication bug introduced by the Play Store API.

In addition to the various Linux, Windows, and Android environments we support, we’re also happy to announce that since the last release in October we’ve been included in Homebrew for macOS users!

How Researchers Use apkeep to Understand the Android App Landscape

Researchers and users contributed most of the features of this release, including downloading dex metadata containing Google’s Cloud Profiles. This feature supports their own research, which highlights how these Android compilation profiles can be a vital source of information for evaluating dynamic testing. Numerous other projects have cited apkeep usage in their own workflows. For example, Exodus Privacy uses it to power the εxodus tool’s downloads when they monitor the privacy properties of apps. Various research teams have noted their own use of the tool in whitepapers, including one team that used the tool to download 21,154 apps in a widespread study of Android evasive malware. We are proud to provide a reliable tool in the toolbox they use to power their work.

What’s in Store for apkeep?

Our goals with apkeep have remained constant: provide a reliable, fast, and safe way to download apps from multiple app providers, not just the Google Play Store. While we’ve focused on it as the major Android app provider of choice across much of the world, we’ve expanded support to other stores as well, such as F-Droid for downloading open source apps. We’d like to continue broadening apkeep’s list of supported providers, to make it easy to do comparative analysis of apps provided in different contexts. For this, we’d love your contributions.

How You Can Help

If you’re using apkeep as part of your own toolbox (whether for malware analysis, app auditing, or simply app archiving), let us know! And if you like what we do, please consider donating to EFF to support our work.

👎 California's Terrible, No Good, Very Bad Social Media Ban | EFFector 38.9

[link]

Deeplinks

May 06 2026 at 04:11 PM

We'd all like the internet to be a better place—for kids and adults alike. But in the name of online safety, governments around the world are racing to impose a dangerous new system of control. Are age gates the silver bullet to the internet's problems they're being promoted as? Or are we being sold a bill of goods? We're answering this question and more in our latest EFFector newsletter.

For over 35 years, EFFector has been your guide to understanding the intersection of technology, civil liberties, and the law. This latest issue covers an attack on VPNs in Utah, a livestream on how to disenshittify the internet, and California's proposed social media ban that could set a dangerous new precedent for online censorship.

Prefer to listen in? EFFector is now available on all major podcast platforms. This time, we're having a conversation with EFF Legislative Analyst Molly Buckley on why social media bans can't sidestep the U.S. Constitution. You can find the episode and subscribe on your podcast platform of choice.

Want to help push back on these misguided regulations? Sign up for EFF's EFFector newsletter for updates, ways to take action, and new merch drops. You can also fuel the fight for privacy and free speech online when you support EFF today!

The SECURE Data Act is Not a Serious Piece of Privacy Legislation

[link]

Deeplinks

May 06 2026 at 02:38 PM

The federal SECURE Data Act is not a serious consumer privacy bill, and its provisions—if enacted—would be a retreat from already insufficient state protections.

Republicans on the House Energy and Commerce Committee released a draft of the bill late last month without bipartisan support. The bill is weaker than congressional proposals in prior years, as well as most of the 21 state consumer privacy laws already on the books.

The bill could wipe out hundreds of state privacy protections.

Most troubling for EFF: the bill would preempt dozens, if not hundreds, of state laws that regulate related topics, and it would not allow consumers to sue to protect their own rights (commonly called a private right of action). And it comes nowhere close to banning online behavioral advertising, a practice that fuels technology companies’ ever-increasing hunt for personal data.

The bill also suffers from many other flaws including weak opt-out defaults, inadequate data minimization requirements, and large definitional loopholes for companies.

Key Provisions

The bill would give consumers some rights to take action to control their personal data, such as access, correction, deletion, and limited portability. These rights have become standard in all data privacy proposals in recent years.

The bill would also require companies to obtain your consent before processing your sensitive data, or using any of your personal data for a previously undisclosed purpose. Absent your consent, a company couldn’t do these things.

Further, the bill would allow you to opt out of (1) targeted third-party advertising, (2) the sale of your personal data, and (3) profiling of you that has a legal, healthcare, housing, or employment effect. Unfortunately, a company could keep doing these invasive things to you, unless you opted out.

The bill would also require data brokers that make at least 50 percent of their profits from the sale of personal data to register in a public database maintained by the Federal Trade Commission (FTC).

Preemption of Too Many State Laws

Federal privacy laws should allow states to build ever stronger rights on top of the federal floor. Many federal privacy laws allow this, including the Health Insurance Portability and Accountability Act, the Video Privacy Protection Act, and the Electronic Communications Privacy Act.

The SECURE Data Act would not do that. Instead, it would wipe out dozens, if not hundreds, of existing state privacy protections. Section 15 of the bill would preempt any “law, rule, regulation, requirement, standard, or other provision [that] relates to the provisions of this Act.” This would kill the 21 state consumer privacy laws passed in the past few years. These state bills aren’t strong enough, but they are still better than this federal proposal. For example, California maintains a data broker deletion tool and requires companies to comply with automatic opt-out signals—including one that is built into EFF’s Privacy Badger.

Because the SECURE Data Act has provisions that relate to data privacy and security, it could preempt all 50 state data breach laws and many others. It could also preempt state laws related to specific pieces of sensitive data, like bans on the sale of biometric or location information. Some states like California have constitutional provisions that protect an individual’s right to privacy, which can be enforced against companies. That constitutional provision, as well as state privacy torts, could also be in danger if this bill passed.

No Private Enforcement, A New Cure Period, and Vague Security Powers

Strong consumer privacy laws should allow consumers to take companies to court to defend their own rights. This is essential because regulators do not have the resources to catch every violation, and federal consumer enforcement agencies have been gutted during the current administration.

The SECURE Data Act does not have a private right of action. The FTC, along with state attorneys general, has primary enforcement authority. The bill also gives companies 45 days to “cure” any violation with no penalty after they are caught.

Moreover, Section 8 of the bill creates a vaguely defined self-regulatory scheme in which companies can apply to be audited by an “independent organization” that will apply a “code of conduct.” Following this code of conduct would give companies a presumption that they are complying with the law. This provision is an implicit acknowledgement that the bill does not provide regulators with any new resources to enforce new protections.

Section 9 of the bill would give the Secretary of Commerce broad power to “take any action necessary and appropriate to support the international flow of personal data,” including assessing “security interests of the United States.” The scope of this amorphous provision is unclear, but it likely does not belong in a consumer protection bill.

Weak Privacy Defaults

Your online privacy should not depend on whether you have the time, patience, and knowledge to navigate a website and turn off invasive tracking. Good privacy laws build in data minimization requirements—meaning there should be a default standard that prevents companies from processing your data for purposes that are not needed to provide you with the service you asked for.

The SECURE Data Act puts the burden on you to opt out of invasive company practices, like targeted third-party advertising, the sale of your personal data, and profiling. The bill at least requires companies to obtain your consent before processing your sensitive data (like selling your precise location). These consent requirements, however, are often an invitation for companies to trick you into clicking a button to give away your rights in hard-to-read policies. Indeed, few people would knowingly agree to let a company sell their personal data to a broker who turns around and sells it to the government.

Section 3 of the bill uses the term “data minimization,” but it is data minimization in name only. The provision does not limit a company’s processing of data to only what is necessary to provide the customer with the good or service they asked for. Instead, the provision limits processing of data to only what a company “disclosed to the customer,” meaning that if it appears in the confusing privacy policy that nobody reads, it is okay.

And the bill would not even allow you to restrict certain uses of your data. As companies seek more data for AI systems, many internet users do not want their private personal data to be used to train those models. However, the bill makes clear that “nothing in this Act may be construed to restrict” a company from collecting, using, or retaining your data to “develop” or “improve” a new technology.

Other Flawed Definitions and Loopholes

The bill has numerous loopholes that technology companies would exploit if the bill were to become law. Below is just a sampling:

- Government contractors: Under Section 13(b)(2), government contractors are exempt from the bill, which could be wrongly interpreted to exempt certain data brokers from sale restrictions when those sales are made to the government. This type of exemption could benefit surveillance companies like Clearview AI, which previously argued it was exempt from Illinois’ strict biometric law using a similar contractor exception. This is likely not the authors’ intention, since the definition of sale includes those made “to a government entity.”

- Sale definition: The definition in Section 16(28) is too narrow. A sale should mean any exchange for monetary “or other valuable” consideration, as in some other privacy laws.

- Biometric information definition: The definition in Section 16(4) excludes data generated from a photo or video, and the definition excludes face scans not meant to “identify a specific individual.” This could be wrongly interpreted to allow biometric identification from security camera footage, or biometric use for sentiment or demographic analysis.

- Personal data definition: The definition in Section 16(21) exempts “de-identified data” from the definition of personal data, which could allow companies to do anything with de-identified data because that data is not protected by the law. The problem with de-identified data is that many times it is not.

- Deletion requests: With regard to data that a company obtained from a third party, Section 2(d)(5) would treat a consumer’s deletion request merely as an opt-out request. And even if a customer requested deletion, a company might be able to retain the data for research purposes under Section 11(a)(9)(A).

- Profiling definition: Under the definition in Section 16(25), companies could profile so long as the profiling is not “solely automated.” The flimsiest human review would exempt highly automated profiling.

Congress is long overdue to enact a strong comprehensive consumer data privacy law, and we have sketched what it should look like. But the SECURE Data Act is woefully inadequate. In fact, it would cause even more corporate surveillance of our personal information, by wiping out state laws that are more protective than this federal bill. Even worse, this bill would block state legislatures from protecting their residents from the privacy threats of tomorrow that are unforeseeable today.

EFF and 18 Organizations Urge UK Policymakers to Prioritize Addressing the Roots of Online Harm

[link]

Deeplinks

May 05 2026 at 10:41 AM

EFF joins 18 organizations in writing a letter to UK policymakers urging them to address the root causes of online harm—rather than undermining the open web through blunt restrictions.

The coalition, which includes Mozilla, Tor Project, and Open Rights Group, warns that proposed measures following the passage of the Children’s Wellbeing and Schools Bill risk fundamentally reshaping the internet in harmful ways. Chief among these proposals are sweeping age-gating requirements and access restrictions that would apply not only to young people, but effectively to all users.

While framed as efforts to protect children online, these policies rely heavily on age assurance technologies that are either inaccurate, privacy-invasive, or both. As the letter notes, mandating such systems across a wide range of services—from social media and video games to VPNs and even basic websites—would force users to verify their identity simply to access the web. This creates serious risks, including expanded surveillance, data breaches, and the erosion of anonymity.

Beyond privacy concerns, the signatories argue that these measures threaten the core architecture of the open internet. Age-gating at scale could fragment the web into a patchwork of restricted jurisdictions, limit access to information, and entrench the dominance of powerful gatekeepers like app stores and platform ecosystems. In doing so, policymakers risk weakening the very qualities—interoperability, accessibility, and openness—that have made the internet a global public resource.

The letter also emphasizes what’s missing from the current policy approach: meaningful efforts to address the underlying drivers of online harm. Many digital platforms are designed to maximize engagement and profit through pervasive data collection and targeted advertising, often at the expense of user safety and autonomy. Rather than imposing access bans, the coalition calls on UK policymakers to hold companies accountable for these systemic practices and to prioritize user rights by design.

Importantly, the signatories highlight that the internet remains a vital space for young people: offering access to information, support networks, and opportunities for expression that may not exist offline. Policies that restrict access risk cutting off these lifelines without meaningfully reducing harm.

The message is clear: protecting users online requires more than heavy-handed restrictions. It demands thoughtful, rights-respecting policies that tackle the business models and design choices driving harm, while preserving the open, global nature of the web.

Shut Down Turnkey Totalitarianism

[link]

Deeplinks

May 05 2026 at 07:11 AM

William Binney, the NSA surveillance architect-turned-whistleblower, called it the "turnkey totalitarian state." Whoever sits in power gains access to a boundless surveillance empire that scorns privacy and crushes dissent. Politicians will come and go, but you can help us claw the tools of oppression out of government hands.

Become a Monthly Sustaining Donor

We must stand strong to uphold your privacy and free expression as democratic principles. With members around the world, EFF is empowered to use its trusted voice and formidable advocacy to protect your rights online. Whether giving monthly or one-time donations, members have helped EFF:

- Sue to stop warrantless searches of Automated License Plate Reader (ALPR) records, which reveal millions of drivers’ private habits, movements, and associations.

- Launch Rayhunter, an open source tool that empowers you to help search out cell-site simulators capable of tracking the movements of protestors, journalists, and more.

- Help journalists see through the spin of "copaganda" with our Selling Safety report, which breaks down how policing technology companies often market their tools with misleading claims.

Right now, the U.S. Congress is on the verge of renewing the international mass spying program known as Section 702, affecting millions. EFF is rallying to cut through the politics and give ordinary people a chance to stop this oppressive surveillance. It’s only possible with help from supporters like you, so join EFF today.

The New EFF Member Gear

Get this year’s new member t-shirt when you join EFF. Aptly titled "Claw Back," the design features an orange boy swatting at the street-level surveillance equipment multiplying in our communities. You might empathize with him, but there’s a better way. Let’s end the law enforcement contracts, harmful practices, and twisted logic that enable mass spying in the first place.

You can also get a brand new set of eleven soft and supple polyglot puffy stickers as a token of thanks. Whether you're a kid or a kid at heart, these nostalgic stickers are perfect for digital devices, lunchboxes, and notebooks alike. Our little Ghostie protects privacy in six languages: Arabic, English, Japanese, Persian, Russian, and Spanish.

And for a limited time, get a Privacy Badger Crewneck sweater to help you browse the web with confidence. The embroidered Privacy Badger mascot appears above Traditional Chinese for "privacy" because human rights are universal. Millions of people around the world use Privacy Badger, EFF's free browser extension that blocks hidden trackers that twist your web browsing into a commodity for Big Tech, advertisers, scammers, and data brokers.

Privacy is a human right because it gives you a fundamental measure of security and freedom. We owe it to ourselves to fight the mass surveillance used to control and intimidate people. Let’s do this. Join EFF today with a monthly donation or one-time donation and help claw back your privacy.

____________________

EFF is a member-supported U.S. 501(c)(3) organization. We've received top ratings from the nonprofit watchdog Charity Navigator since 2013! Your donation is tax-deductible as allowed by law.

EFF Submission to UK Consultation on Digital ID

[link]

Deeplinks

May 04 2026 at 06:35 PM

Last September, the United Kingdom’s Prime Minister Keir Starmer announced plans to introduce a new digital ID scheme in the country. The scheme aims to make it easier for people to prove their identities by creating a virtual ID on personal devices with information like names, date of birth, nationality or residency status, and a photo to verify their right to live and work in the country.

Since then, EFF has joined UK-based civil society organizations in urging the government to reconsider this proposal. In one joint letter from December, ahead of Parliament’s debate around a petition signed by 2.9 million people calling for an end to the government’s plans to roll out a national digital ID, EFF and 12 other civil society organizations wrote to politicians in the country urging MPs to reject the Labour government’s proposal.

Nevertheless, politicians have continued to explore ways to build out a digital ID system in the country, often fluctuating between different ideas and conceptualisations for such a scheme. In their search for clarity, the government launched a consultation, ‘Making public services work for you with your digital identity,’ seeking views on a proposed national digital ID system in the UK.

EFF submitted comments to this consultation, focusing on six interconnected issues:

- Mission creep

- Infringements on privacy rights

- Serious security risks

- Reliance on inaccurate and unproven technologies

- Discrimination and exclusion

- The deepening of entrenched power imbalances between the state and the public.

Even the strongest recommended safeguards cannot resolve these issues, or the fundamental problem that a mandatory digital ID scheme shifts power dramatically away from individuals and toward the state. Such schemes are pursued as a technological solution to offline problems, but instead they allow the state to determine what you can access, not just verify who you are, by functioning as a key that opens, or closes, doors to essential services and experiences.

No one should be coerced—technically or socially—into a digital system in order to participate fully in public life. It is essential that the UK government listen to people in the country and say no to digital ID.

Read our submission in full here.

Getting Digital Fairness Right: EFF's Recommendations for the EU's Digital Fairness Act

[link]

Deeplinks

May 04 2026 at 03:33 PM

Digital Fairness in the EU

The next few years will be decisive for EU digital policymaking. With major laws like the Digital Services Act, the Digital Markets Act, and the AI Act now in place, the EU is entering an enforcement era that will show whether these rules are rights-respecting or drift toward overreach and corporate control. With the EU’s proposed Digital Fairness Act (DFA), the Commission is now turning to increasingly visible risks for users, such as dark patterns and exploitative personalization. Its “Digital Fairness Fitness Check” makes clear that existing consumer rules need updating to reflect how digital markets operate today.

But not all proposed solutions point in the right direction. Regulators are already flirting with measures that rely on expanded surveillance, such as age verification mandates—surface-level fixes that risk undermining fundamental rights while offering little more than a false sense of protection.

For EFF, digital fairness means addressing the root causes of harm, not requiring platforms to exert more control over their users. It means safeguarding privacy, freedom of expression, and the rights of users and developers.

If the DFA is to make a real difference, it must tackle structural imbalances. Lawmakers should focus on two interlocking principles. First, prioritize privacy. Reforms should address harms driven by surveillance-based business models, alongside deceptive design practices that impair informed choices. Second, strengthen user sovereignty, which is also a necessary precondition for European digital sovereignty more broadly. Strengthening user sovereignty means taking measures that address user lock-in, coercive contract terms, and manipulative defaults that limit users’ ability to freely choose how they use digital products and services.

Together, these principles would support the EU’s objectives of consistent consumer protection, fair markets, and a more coherent legal framework. If implemented properly, the EU could address power imbalances and build trust in Europe’s digital economy.

Ban Dark Patterns

Dark patterns are practices that impair users’ ability to make informed and autonomous decisions. Many companies deploy these tactics through interface design to steer choices and influence behavior. Their impact goes beyond poor consumer decisions. Dark patterns push users to share personal data they would not otherwise disclose and undermine autonomy by making alternatives harder to access.

The DFA should address this by clearly prohibiting misleading interfaces that distort user choice in commercial contexts. While the Digital Services Act introduced a definition, it only partially bans such practices and leaves gaps across existing consumer law rules. The DFA should close these gaps by, at the very least, introducing explicit prohibitions and clearer enforcement rules, without resorting to design mandates.

Tackle Commercial Surveillance

At the core of digital unfairness lies the pervasive collection and use of personal data. Surveillance and profiling drive many of the harms regulators are trying to address, from dark patterns to exploitative personalization. The DFA should tackle these incentives directly by reducing reliance on surveillance-based business models. These practices are fundamentally incompatible with privacy and fairness, and they distort digital markets by rewarding data exploitation rather than quality of service. At a minimum, the DFA should address unfair profiling and surveillance advertising by strengthening privacy rights and banning pay-for-privacy schemes. Users should not have to trade their data or pay extra to avoid being tracked. Accordingly, the DFA should support the recognition of automated privacy signals by web browsers and mobile operating systems, which give users a better way to reject tracking and exercise their rights. Practices that override such signals through banners or interface design should be considered unfair.